Meta Codec Avatars Now Have Very Photorealistic Clothing

Meta’s Codec Avatars already have very convincing faces. Now they are about to get photorealistic VR clothing, thanks to new research.

Meta has shared details of its new research on the photorealistic simulation of clothing for its Codec Avatars.

The new photorealistic clothing solution builds on Codec Avatars, Meta’s long-term, ongoing research project on realistic-looking avatars that are driven in real time by VR hardware sensors. The research on realistic VR clothing comes from the same team behind Codec Avatars.

Rather than using traditional rendering, Codec Avatars deploy a series of neural networks that learn the appearance of a person. Based on sensor input, the networks constantly update the current state and then decode that state into geometry and the final output textures. The Codec Avatars system has evolved several times since its initial presentation. For instance, the team has developed more realistic eyes, and there is a version that requires eye tracking and microphone input. There is also a 2.0 version that comes close to complete realism, as well as a generation of Codec Avatars that needs only an iPhone with Face ID.
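The encode-then-decode flow described above can be illustrated with a toy sketch. This is not Meta's implementation: the function names, the array sizes, and the random weights standing in for trained networks are all hypothetical, chosen only to show how a sensor frame might become a compact state that is then decoded into geometry and a texture.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
SENSOR_DIM, STATE_DIM = 16, 8
NUM_VERTICES, TEX_SIZE = 100, 4

# Random weights stand in for trained neural networks (assumption).
W_enc = rng.normal(size=(STATE_DIM, SENSOR_DIM))
W_geo = rng.normal(size=(NUM_VERTICES * 3, STATE_DIM))
W_tex = rng.normal(size=(TEX_SIZE * TEX_SIZE * 3, STATE_DIM))


def encode(sensor_input):
    """Map one frame of sensor input to a compact state vector."""
    return np.tanh(W_enc @ sensor_input)


def decode(state):
    """Decode the state into vertex positions and an RGB texture."""
    geometry = (W_geo @ state).reshape(NUM_VERTICES, 3)
    texture = (W_tex @ state).reshape(TEX_SIZE, TEX_SIZE, 3)
    return geometry, texture


# One simulated sensor frame goes through the whole pipeline.
sensor_frame = rng.normal(size=SENSOR_DIM)
state = encode(sensor_frame)
geometry, texture = decode(state)
print(state.shape, geometry.shape, texture.shape)
```

In a real system each new sensor frame would produce a new state, so the decoded geometry and texture would change from frame to frame.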

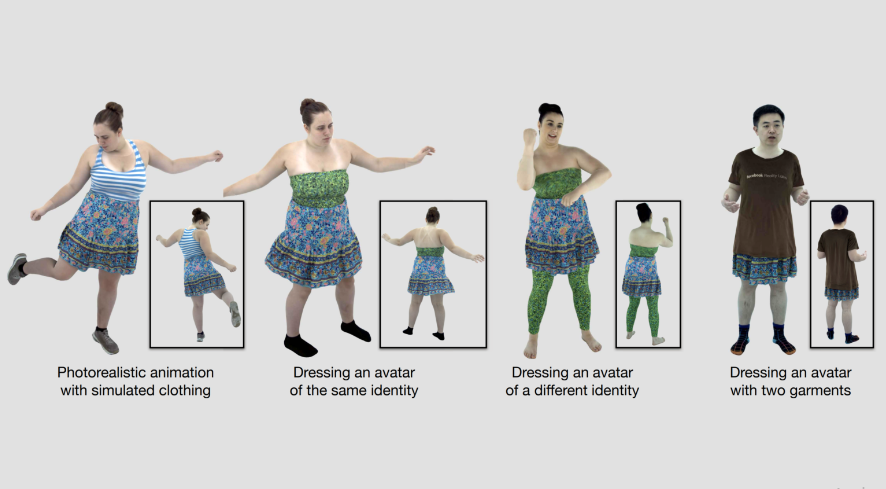

The latest research on outfits for Codec Avatars is titled “Dressing Avatars: Deep Photorealistic Appearance for Physically Simulated Clothing”. It applies neural networks to the realistic deformation of clothes for any body type.

In the research published by Donglai Xiang and co-authors, artificial intelligence once again takes center stage. The neural network calculates how, for instance, a loose dress falls or deforms on different body shapes when the person turns.

The new clothing system is computed separately and runs in parallel with the usual Codec Avatars system.

The visual impression of the virtual clothing for the Codec Avatars is enriched via effects, shadows, and ambient occlusion.

The system can freely adjust the sizes of the virtual garments. The software is even capable of layering different types of fabrics.

The presentation video shows that the system can even make an oversized t-shirt glide realistically over a dress.

Computing the new clothing effects on a realistic Codec Avatar requires a powerful workstation with powerful graphics hardware, so the system is still too demanding for a typical VR headset like the Meta Quest 2. In one instance in the research, GeForce RTX 3090s were used to run the simulation at 30fps. However, it is possible that the research team could introduce machine learning optimizations that would make the system renderable on a headset. The object recognition and speech synthesis algorithms that now run on smartphones once needed powerful PC GPUs.
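To put the 30fps figure in perspective, a frame rate can be converted into a per-frame time budget. The short calculation below is only a back-of-the-envelope sketch. The 72 Hz value is an assumption based on the Quest 2's default refresh rate and does not come from the research, and `frame_budget_ms` is a helper name made up for this example.

```python
def frame_budget_ms(fps):
    """Return the time available per frame, in milliseconds."""
    return 1000.0 / fps


research_fps = 30  # frame rate reported for the workstation setup
headset_fps = 72   # assumption: Quest 2 default refresh rate

research_budget = frame_budget_ms(research_fps)
headset_budget = frame_budget_ms(headset_fps)
ratio = research_budget / headset_budget

print(f"Budget at {research_fps} fps: {research_budget:.1f} ms per frame")
print(f"Budget at {headset_fps} fps: {headset_budget:.1f} ms per frame")
print(f"The headset target leaves {ratio:.1f}x less time per frame")
```

Under these assumptions the headset would have well under half the time per frame, on far weaker hardware, which illustrates why optimizations would be needed before the system could run on a standalone device.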

The current Meta Avatars come in a very basic, cartoony style. Their realism has actually gone down over time to accommodate larger groups in complex environments in apps such as Horizon Worlds, which run on the Quest 2’s mobile processor.

Meta’s Codec Avatars could be launched as a separate option rather than as a direct update to Meta’s current cartoon avatars. In a past interview with Lex Fridman, Zuckerberg described a scenario where users could use “expressionist” avatars in casual gaming applications and deploy their “realistic” avatars in more serious use cases such as work meetings.

However, the system won’t be released any time soon. Yaser Sheikh, research director on the project, stated in May that metaverse telephony was still “five miracles” away from realization and market launch. Metaverse telephony could one day be used in AR headsets to render people as realistic holograms in a real-world environment.

By Rob Grant, Virtual Reality Times - Metaverse & VR. Source: https://virtualrealitytimes.com/2022/07/22/meta-codec-avatars-now-have-very-photorealistic-clothing/