Meta Introduces AR Engine for Everyone

Meta has developed a new augmented reality engine that enables developers to easily create engaging AR experiences that can run on just about any device. The new AR engine will provide creators with the core technologies required to create augmented reality experiences that could potentially reach millions of users.

What sets the new Meta augmented reality tools apart is that they run on just about any device, not only on the more sophisticated XR devices.

Meta recently unveiled its Augmented Reality (AR) toolkit designed to simplify cross-platform development. Through the toolkit, Meta aims to make it easier for developers to build augmented reality experiences capable of running on smartphones, VR/XR headsets, or computers without the need to ‘port’ or redesign for every platform.

The new Meta AR engine could also pave the way for future advanced augmented reality glasses such as Meta’s Project Nazare.

AR hardware currently spans many devices with different capabilities. Displays range from a hand-held smartphone, which offers a small, movable window onto the augmented reality view, to laptop screens and computer monitors, which offer a larger view in a fixed position, to virtual reality headsets, which deliver 360-degree immersive experiences.

Meta says that to democratize access to AR experiences, it is focusing on performance optimizations. Meta has one of the biggest augmented reality platforms in the world and gives billions of users access to its apps. Meta’s ecosystem also provides a platform for hundreds of thousands of creators.

Meta currently has AR tools that work across many devices, including phones and mixed reality headsets such as the Meta Quest Pro. The AR tools are also available on lower-end devices that are commonly used in parts of the world with low connectivity.

Meta says many teams within the company are keen on creating augmented reality experiences, but doing so requires several different pieces of technology. Examples include technology for managing input, such as input from the camera, and for running computer vision tracking that anchors content to the face, the body, or the environment.

There is also a need for advanced rendering that delivers high-quality imagery on a variety of edge devices with different hardware.

Meta says its team is constantly seeking to avoid the overhead of building all that technology from scratch. That is where its AR engine comes in: it gives creators and developers the core technologies they need to build augmented reality experiences.

AR Engine to Unlock Creativity

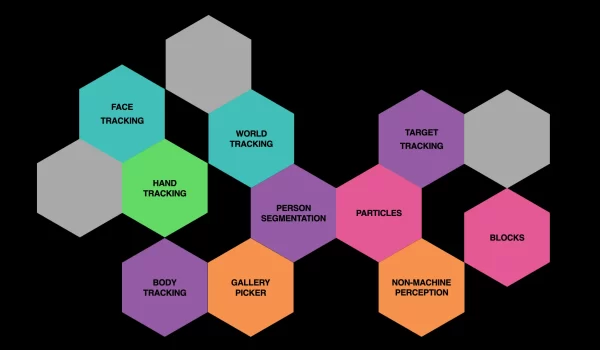

Building for augmented reality gives developers plenty of opportunities to explore their creative side. Meta says that providing developers with a number of AR capabilities as building blocks enables them to unlock their creativity.

According to Meta, these creative capabilities can be either people-centric or world-centric. The people-centric capabilities use people tracking to anchor content to a person. One example is using iris tracking to control a game.

The world-centric capabilities overlay content on the real world using features such as target tracking and plane tracking. Target tracking can, for instance, be used to create a QR code greeting card that displays a unique animation and custom text to the recipient.

Apart from the computer vision capabilities, there are also capabilities that do not require a camera or computer vision and use audio instead. Meta’s platform can, for instance, be used to build digital content that responds to music beats or that lets you change your voice when recording audio.

Meta says it is providing creators with the option of mixing and matching the many capabilities to their heart’s content. Meta wants to make these capabilities device- and platform-agnostic where feasible for maximum flexibility when it comes to customizing content to suit the targeted form factor.

Increasing the Reach of AR Experiences

Meta says that it does a lot of work “behind the scenes” to ensure augmented reality experiences reach the widest possible audience.

This begins with server-side optimization of assets: removing empty pixels from images and comments from scripts, compressing assets for the target device, and adjusting the packaging by splitting assets into multiple files or combining them into one larger file. These behind-the-scenes adjustments let Meta deliver assets over varied connection speeds.
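The server-side steps described above can be illustrated with a minimal Python sketch. It is not Meta's pipeline: the function names, the `//` comment convention, and the greedy bundling rule are all assumptions made for illustration. It strips comment-only lines from a script, compresses each asset with gzip, and groups the results into bundles under a size limit.

```python
import gzip


def strip_comment_lines(script: str) -> str:
    """Drop blank lines and lines that contain only a // comment.

    Hypothetical helper: trailing comments after code are left alone,
    so string literals that contain // are never damaged.
    """
    kept = []
    for line in script.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("//"):
            continue
        kept.append(line)
    return "\n".join(kept)


def package_assets(assets: dict, max_bundle_bytes: int) -> list:
    """Compress each asset and greedily group them into bundles.

    A small max_bundle_bytes yields many small files (assumed to suit
    slow connections); a large one yields a single larger file. An asset
    bigger than the limit still gets a bundle of its own.
    """
    bundles = []
    current = {}
    current_size = 0
    for name, data in assets.items():
        compressed = gzip.compress(data)
        if current and current_size + len(compressed) > max_bundle_bytes:
            bundles.append(current)
            current, current_size = {}, 0
        current[name] = compressed
        current_size += len(compressed)
    if current:
        bundles.append(current)
    return bundles
```

Calling `package_assets` with a large limit produces one bundle, while a small limit produces one bundle per asset; a real pipeline would presumably pick the limit from the measured connection speed of the target device.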

Meta says it is now optimizing the runtime as well, including preloading assets and prerendering content as modern game engines do. However, Meta says it is taking this much further across all components.

Tracking is one of the leading performance hogs in Meta’s system. Meta says it can improve on this by parallelizing operations, which allows for a delicate balance: running inference on the most current data without sacrificing the fluidity and smoothness of the visuals in an experience.
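One common way to get this kind of balance, sketched below in Python, is to run tracking on a background thread and let the render loop read only the most recent result instead of waiting for inference. This is an illustrative pattern, not Meta's implementation; `LatestResult`, `fake_track`, and the other names are hypothetical.

```python
import threading


class LatestResult:
    """Thread-safe slot that holds only the most recent tracking result."""

    def __init__(self):
        self._lock = threading.Lock()
        self._value = None

    def publish(self, value):
        with self._lock:
            self._value = value

    def read(self):
        with self._lock:
            return self._value


def fake_track(frame_id: int) -> dict:
    """Stand-in for a slow tracking model (hypothetical)."""
    return {"frame": frame_id, "anchor": (frame_id * 0.1, 0.0)}


def tracking_worker(frames, slot: LatestResult) -> None:
    """Run inference frame by frame, overwriting the previous result."""
    for frame_id in frames:
        slot.publish(fake_track(frame_id))


def render_frame(slot: LatestResult, frame_id: int) -> str:
    """Draw with whatever result is available; never block on inference."""
    result = slot.read()
    anchor = result["anchor"] if result else (0.0, 0.0)
    return f"frame {frame_id} drawn at anchor {anchor}"


def run_demo(num_frames: int) -> list:
    """Track and render concurrently, then return the rendered frames."""
    slot = LatestResult()
    worker = threading.Thread(target=tracking_worker, args=(range(num_frames), slot))
    worker.start()
    rendered = [render_frame(slot, i) for i in range(num_frames)]
    worker.join()
    return rendered
```

The renderer may draw a frame with a slightly stale anchor, which is the trade-off the paragraph describes: visuals stay smooth while inference catches up on the newest data.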

Estimating hardware performance and optimizing ahead of time has also brought large gains. Knowing how particular hardware performs can guide the choice between higher- and lower-precision tracking models, along with the choice of assets at different fidelity levels, to improve performance.
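As a rough sketch of that idea, the Python snippet below maps an estimated device score to a tracking model and an asset fidelity level. The score scale, the thresholds, and the profile names are invented for illustration; the article does not say how Meta measures or buckets hardware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    tracking_model: str  # hypothetical model name
    texture_size: int    # hypothetical asset fidelity, in pixels per side


# (minimum score, profile), ordered from most to least capable.
# All thresholds are assumptions for this sketch.
PROFILES = [
    (80.0, Profile("high_precision", 2048)),
    (40.0, Profile("balanced", 1024)),
    (0.0, Profile("low_precision", 512)),
]


def select_profile(benchmark_score: float) -> Profile:
    """Pick the most capable profile whose threshold the device meets."""
    for min_score, profile in PROFILES:
        if benchmark_score >= min_score:
            return profile
    # Scores below every threshold fall back to the cheapest profile.
    return PROFILES[-1][1]
```

Because the choice depends only on a score known before the experience starts, it can be made ahead of time, for example when deciding which assets to send to a device.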

A fast computer far outperforms even the most advanced smartphones. The challenge, therefore, is to scale tracking, graphics resolution, and special-effects quality to match the processing power and internet bandwidth of each device.

While future XR devices will be much faster, Meta still wants developers to work on augmented reality solutions with current technologies so that the ecosystem will be ready when augmented reality hardware hits its breakthrough moment.

Meta wants to smooth over the hardware challenges so that developers can pursue their creative ideas without grappling with too many technical obstacles. The AR engine aims to bridge that gap and simplify the process of creating AR experiences.

Source: https://virtualrealitytimes.com/2023/02/16/meta-introduces-ar-engine-for-everyone/ (Rob Grant, Virtual Reality Times)